本文发布于Cylon的收藏册,转载请著名原文链接~

前提概述

kubernetes集群中运行在每个Worker节点上的组件 kube-proxy,本文讲解的是如何快速的了解 kube-proxy 的软件架构,而不是流程的分析,专注于 proxy 层面的设计讲解,而不会贴大量的代码

kube-proxy软件设计

kube-proxy 在设计上分为三个模块 server 于 proxy:

- server: 是一个常驻进程用于处理service的事件

- proxy: 是 kube-proxy 的工作核心,实际上的角色是一个 service controller,通过监听 node, service, endpoint 而生成规则

- proxier: 是实现service的组件,例如iptables, ipvs….

如何快速读懂kube-proxy源码

要想快速读懂 kube-proxy 源码就需要对 kube-proxy 设计有深刻的了解,例如需要看 kube-proxy 的实现,我们就可以看 proxy的部分,下列是 proxy 部分的目录结构

$ tree -L 1

.

├── BUILD

├── OWNERS

├── apis

├── config

├── healthcheck

├── iptables

├── ipvs

├── metaproxier

├── metrics

├── userspace

├── util

├── winuserspace

├── winkernel

├── doc.go

├── endpoints.go

├── endpoints_test.go

├── endpointslicecache.go

├── endpointslicecache_test.go

├── service.go

├── service_test.go

├── topology.go

├── topology_test.go

└── types.go

- 目录 ipvs, iptables, 就是所知的 kube-proxy 提供的两种 load balancer

- 目录 apis, 则是kube-proxy 配置文件资源类型的定义,

--config=/etc/kubernetes/kube-proxy-config.yaml所指定问题的shema - 目录config: 定义了每种资源的handler需要实现什么

- service.go, endpoints.go:是controller的实现

- type.go: 是每个资源的interface定义,例如:

- Provider: 规定了每个proxier需要实现什么

- ServicePort: service 控制器需要实现什么

- Endpoint: service 控制器需要实现什么

上述是整个 proxy 的一级结构

service controller

service控制器换句话说,就是工作内容类似于kubernetes集群中的pod控制器那些,所作的工作就是监听对应资源做出相应事件处理,而这个处理被定义为 handler

type Provider interface {

config.EndpointsHandler

config.EndpointSliceHandler

config.ServiceHandler

config.NodeHandler

// Sync immediately synchronizes the Provider's current state to proxy rules.

Sync()

// SyncLoop runs periodic work.

// This is expected to run as a goroutine or as the main loop of the app.

// It does not return.

SyncLoop()

}

由上面代码可以看到,每一个Provider 即 proxier (用于实现的LB的控制器)都需要包含对应资源的事件处理函数于 一个 Sync() 和 SyncLoop(),所以这里将总结为 controller 而不是用于这里给到的术语

同理,其他类型的 controller 则是相同与 service controller

深入理解proxier

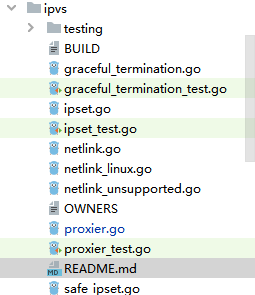

这里将以 ipvs 为例,如图所示,这将是一个 proxier 的实现,而 proxier.go 则是真实的 proxier 实现

而 ipvs 的 proxier 则是如下定义的

type Proxier struct {

// endpointsChanges and serviceChanges contains all changes to endpoints and

// services that happened since last syncProxyRules call. For a single object,

// changes are accumulated, i.e. previous is state from before all of them,

// current is state after applying all of those.

endpointsChanges *proxy.EndpointChangeTracker

serviceChanges *proxy.ServiceChangeTracker

mu sync.Mutex // protects the following fields

serviceMap proxy.ServiceMap

endpointsMap proxy.EndpointsMap

portsMap map[utilproxy.LocalPort]utilproxy.Closeable

nodeLabels map[string]string

// endpointsSynced, endpointSlicesSynced, and servicesSynced are set to true when

// corresponding objects are synced after startup. This is used to avoid updating

// ipvs rules with some partial data after kube-proxy restart.

endpointsSynced bool

endpointSlicesSynced bool

servicesSynced bool

initialized int32

syncRunner *async.BoundedFrequencyRunner // governs calls to syncProxyRules

// These are effectively const and do not need the mutex to be held.

syncPeriod time.Duration

minSyncPeriod time.Duration

// Values are CIDR's to exclude when cleaning up IPVS rules.

excludeCIDRs []*net.IPNet

// Set to true to set sysctls arp_ignore and arp_announce

strictARP bool

iptables utiliptables.Interface

ipvs utilipvs.Interface

ipset utilipset.Interface

exec utilexec.Interface

masqueradeAll bool

masqueradeMark string

localDetector proxyutiliptables.LocalTrafficDetector

hostname string

nodeIP net.IP

portMapper utilproxy.PortOpener

recorder record.EventRecorder

serviceHealthServer healthcheck.ServiceHealthServer

healthzServer healthcheck.ProxierHealthUpdater

ipvsScheduler string

// Added as a member to the struct to allow injection for testing.

ipGetter IPGetter

// The following buffers are used to reuse memory and avoid allocations

// that are significantly impacting performance.

iptablesData *bytes.Buffer

filterChainsData *bytes.Buffer

natChains *bytes.Buffer

filterChains *bytes.Buffer

natRules *bytes.Buffer

filterRules *bytes.Buffer

// Added as a member to the struct to allow injection for testing.

netlinkHandle NetLinkHandle

// ipsetList is the list of ipsets that ipvs proxier used.

ipsetList map[string]*IPSet

// Values are as a parameter to select the interfaces which nodeport works.

nodePortAddresses []string

// networkInterfacer defines an interface for several net library functions.

// Inject for test purpose.

networkInterfacer utilproxy.NetworkInterfacer

gracefuldeleteManager *GracefulTerminationManager

}

再看这个结构体的 structure,发现他实现了上述提到的 Handler 和 Sync()

可以看到 Sync() 的实现是调用 runner.Run()

func (proxier *Proxier) Sync() {

if proxier.healthzServer != nil {

proxier.healthzServer.QueuedUpdate()

}

metrics.SyncProxyRulesLastQueuedTimestamp.SetToCurrentTime()

proxier.syncRunner.Run()

}

而 handler 中的任意事件的触发则是调用 Sync()

// handler 不同的事件均指向 On{rs}Update() 函数

func (proxier *Proxier) OnEndpointsAdd(endpoints *v1.Endpoints) {

proxier.OnEndpointsUpdate(nil, endpoints)

}

// OnEndpointsDelete is called whenever deletion of an existing endpoints object is observed.

func (proxier *Proxier) OnEndpointsDelete(endpoints *v1.Endpoints) {

proxier.OnEndpointsUpdate(endpoints, nil)

}

...

// 而 update 调用的则是 Sync()

func (proxier *Proxier) OnEndpointsUpdate(oldEndpoints, endpoints *v1.Endpoints) {

if proxier.endpointsChanges.Update(oldEndpoints, endpoints) && proxier.isInitialized() {

proxier.Sync()

}

}

到了这里,明了了 runner 才是这个 proxier 的核心,被定义于 proxier 结构图的 syncRunner 在初始化时被注入了函数 syncProxyRules()

func NewProxier(ipt utiliptables.Interface,

...

proxier.syncRunner = async.NewBoundedFrequencyRunner("sync-runner", proxier.syncProxyRules, minSyncPeriod, syncPeriod, burstSyncs)

proxier.gracefuldeleteManager.Run()

return proxier, nil

}

syncProxyRules()

而这个 syncProxyRules() 则是完成了整个 ipvs 以及 service 的生命周期

对于了解kubernetes架构的同学来说,kube-proxy 完成的功能就是 ipvs 的规则管理,那么换句话说就是干预 ipvs 规则的生命周期,也就是分析函数 syncProxyRules() 是如何干预这些规则的。

syncProxyRules() 将动作分为两部分,一是对 ipvs 资源的增改,二是对资源的销毁;引入完概念后,就开始进行分析吧。

600多行的代码看起来很困难,那就拆分成步骤进行分析

step1 前期准备工作

为什么这么叫第一部分呢?看下列代码就知道,做的工作和 ipvs rules 没多大关系

func (proxier *Proxier) syncProxyRules() {

// 互斥锁

proxier.mu.Lock()

defer proxier.mu.Unlock()

// don't sync rules till we've received services and endpoints

// 在等待接收完信息前,不同步收到的 services和endpoints资源

if !proxier.isInitialized() {

klog.V(2).Info("Not syncing ipvs rules until Services and Endpoints have been received from master")

return

}

// Keep track of how long syncs take.

// 记录同步耗时

start := time.Now()

defer func() {

metrics.SyncProxyRulesLatency.Observe(metrics.SinceInSeconds(start))

klog.V(4).Infof("syncProxyRules took %v", time.Since(start))

}()

// 获取本地多个IP地址

localAddrs, err := utilproxy.GetLocalAddrs()

if err != nil {

klog.Errorf("Failed to get local addresses during proxy sync: %v, assuming external IPs are not local", err)

} else if len(localAddrs) == 0 {

klog.Warning("No local addresses found, assuming all external IPs are not local")

}

localAddrSet := utilnet.IPSet{}

localAddrSet.Insert(localAddrs...)

// We assume that if this was called, we really want to sync them,

// even if nothing changed in the meantime. In other words, callers are

// responsible for detecting no-op changes and not calling this function.

// 这两步骤正如注释所讲,如果在资源修改的前提下需要同步,也就是update操作包含了增改

serviceUpdateResult := proxy.UpdateServiceMap(proxier.serviceMap, proxier.serviceChanges)

endpointUpdateResult := proxier.endpointsMap.Update(proxier.endpointsChanges)

// 陈腐的UDP信息处理

staleServices := serviceUpdateResult.UDPStaleClusterIP

// merge stale services gathered from updateEndpointsMap

for _, svcPortName := range endpointUpdateResult.StaleServiceNames {

if svcInfo, ok := proxier.serviceMap[svcPortName]; ok && svcInfo != nil && conntrack.IsClearConntrackNeeded(svcInfo.Protocol()) {

klog.V(2).Infof("Stale %s service %v -> %s", strings.ToLower(string(svcInfo.Protocol())), svcPortName, svcInfo.ClusterIP().String())

staleServices.Insert(svcInfo.ClusterIP().String())

for _, extIP := range svcInfo.ExternalIPStrings() {

staleServices.Insert(extIP)

}

}

}

step2:构建IPVS规则

首先会经历一些预处理的操作 这部分掠过了 L1042-L1140

klog.V(3).Infof("Syncing ipvs Proxier rules")

// Begin install iptables

// Reset all buffers used later.

// This is to avoid memory reallocations and thus improve performance.

proxier.natChains.Reset()

proxier.natRules.Reset()

proxier.filterChains.Reset()

proxier.filterRules.Reset()

// Write table headers.

writeLine(proxier.filterChains, "*filter")

writeLine(proxier.natChains, "*nat")

proxier.createAndLinkeKubeChain()

// make sure dummy interface exists in the system where ipvs Proxier will bind service address on it

_, err = proxier.netlinkHandle.EnsureDummyDevice(DefaultDummyDevice)

if err != nil {

klog.Errorf("Failed to create dummy interface: %s, error: %v", DefaultDummyDevice, err)

return

}

// make sure ip sets exists in the system.

for _, set := range proxier.ipsetList {

if err := ensureIPSet(set); err != nil {

return

}

set.resetEntries()

}

// Accumulate the set of local ports that we will be holding open once this update is complete

replacementPortsMap := map[utilproxy.LocalPort]utilproxy.Closeable{}

// activeIPVSServices represents IPVS service successfully created in this round of sync

activeIPVSServices := map[string]bool{}

// currentIPVSServices represent IPVS services listed from the system

currentIPVSServices := make(map[string]*utilipvs.VirtualServer)

// activeBindAddrs represents ip address successfully bind to DefaultDummyDevice in this round of sync

activeBindAddrs := map[string]bool{}

bindedAddresses, err := proxier.ipGetter.BindedIPs()

if err != nil {

klog.Errorf("error listing addresses binded to dummy interface, error: %v", err)

}

// 检查是否是nodeport类型

hasNodePort := false

for _, svc := range proxier.serviceMap {

svcInfo, ok := svc.(*serviceInfo)

if ok && svcInfo.NodePort() != 0 {

hasNodePort = true

break

}

}

// Both nodeAddresses and nodeIPs can be reused for all nodePort services

// and only need to be computed if we have at least one nodePort service.

var (

// List of node addresses to listen on if a nodePort is set.

nodeAddresses []string

// List of node IP addresses to be used as IPVS services if nodePort is set.

nodeIPs []net.IP

)

if hasNodePort {

nodeAddrSet, err := utilproxy.GetNodeAddresses(proxier.nodePortAddresses, proxier.networkInterfacer)

if err != nil {

klog.Errorf("Failed to get node ip address matching nodeport cidr: %v", err)

} else {

nodeAddresses = nodeAddrSet.List()

for _, address := range nodeAddresses {

if utilproxy.IsZeroCIDR(address) {

nodeIPs, err = proxier.ipGetter.NodeIPs()

if err != nil {

klog.Errorf("Failed to list all node IPs from host, err: %v", err)

}

break

}

nodeIPs = append(nodeIPs, net.ParseIP(address))

}

}

}

接下来是整个构建ipvs规则的关键部分,大概200-300行代码,通过循环 serviceMap 拿到每一个 service 的信息,然后在通过 循环 endpointsMap[svcName] 得到每个 service下所属的 endpoint ,然后构建 ipvs 的规则

L1141-L1542 这里也包含了 nodeport, clusterIP, ingress等不同的service类型

// Build IPVS rules for each service.

for svcName, svc := range proxier.serviceMap {

svcInfo, ok := svc.(*serviceInfo)

if !ok {

klog.Errorf("Failed to cast serviceInfo %q", svcName.String())

continue

}

isIPv6 := utilnet.IsIPv6(svcInfo.ClusterIP())

protocol := strings.ToLower(string(svcInfo.Protocol()))

// Precompute svcNameString; with many services the many calls

// to ServicePortName.String() show up in CPU profiles.

svcNameString := svcName.String()

// 循环endpoint

// Handle traffic that loops back to the originator with SNAT.

for _, e := range proxier.endpointsMap[svcName] {

ep, ok := e.(*proxy.BaseEndpointInfo)

if !ok {

klog.Errorf("Failed to cast BaseEndpointInfo %q", e.String())

continue

}

if !ep.IsLocal {

continue

}

epIP := ep.IP()

epPort, err := ep.Port()

// Error parsing this endpoint has been logged. Skip to next endpoint.

if epIP == "" || err != nil {

continue

}

entry := &utilipset.Entry{

IP: epIP,

Port: epPort,

Protocol: protocol,

IP2: epIP,

SetType: utilipset.HashIPPortIP,

}

if valid := proxier.ipsetList[kubeLoopBackIPSet].validateEntry(entry); !valid {

klog.Errorf("%s", fmt.Sprintf(EntryInvalidErr, entry, proxier.ipsetList[kubeLoopBackIPSet].Name))

continue

}

proxier.ipsetList[kubeLoopBackIPSet].activeEntries.Insert(entry.String())

}

// Capture the clusterIP.

// ipset call

entry := &utilipset.Entry{

IP: svcInfo.ClusterIP().String(),

Port: svcInfo.Port(),

Protocol: protocol,

SetType: utilipset.HashIPPort,

}

// add service Cluster IP:Port to kubeServiceAccess ip set for the purpose of solving hairpin.

// proxier.kubeServiceAccessSet.activeEntries.Insert(entry.String())

if valid := proxier.ipsetList[kubeClusterIPSet].validateEntry(entry); !valid {

klog.Errorf("%s", fmt.Sprintf(EntryInvalidErr, entry, proxier.ipsetList[kubeClusterIPSet].Name))

continue

}

proxier.ipsetList[kubeClusterIPSet].activeEntries.Insert(entry.String())

// ipvs call

serv := &utilipvs.VirtualServer{

Address: svcInfo.ClusterIP(),

Port: uint16(svcInfo.Port()),

Protocol: string(svcInfo.Protocol()),

Scheduler: proxier.ipvsScheduler,

}

// Set session affinity flag and timeout for IPVS service

if svcInfo.SessionAffinityType() == v1.ServiceAffinityClientIP {

serv.Flags |= utilipvs.FlagPersistent

serv.Timeout = uint32(svcInfo.StickyMaxAgeSeconds())

}

// We need to bind ClusterIP to dummy interface, so set `bindAddr` parameter to `true` in syncService()

if err := proxier.syncService(svcNameString, serv, true, bindedAddresses); err == nil {

activeIPVSServices[serv.String()] = true

activeBindAddrs[serv.Address.String()] = true

// ExternalTrafficPolicy only works for NodePort and external LB traffic, does not affect ClusterIP

// So we still need clusterIP rules in onlyNodeLocalEndpoints mode.

if err := proxier.syncEndpoint(svcName, false, serv); err != nil {

klog.Errorf("Failed to sync endpoint for service: %v, err: %v", serv, err)

}

} else {

klog.Errorf("Failed to sync service: %v, err: %v", serv, err)

}

// Capture externalIPs.

for _, externalIP := range svcInfo.ExternalIPStrings() {

// If the "external" IP happens to be an IP that is local to this

// machine, hold the local port open so no other process can open it

// (because the socket might open but it would never work).

if (svcInfo.Protocol() != v1.ProtocolSCTP) && localAddrSet.Has(net.ParseIP(externalIP)) {

// We do not start listening on SCTP ports, according to our agreement in the SCTP support KEP

lp := utilproxy.LocalPort{

Description: "externalIP for " + svcNameString,

IP: externalIP,

Port: svcInfo.Port(),

Protocol: protocol,

}

if proxier.portsMap[lp] != nil {

klog.V(4).Infof("Port %s was open before and is still needed", lp.String())

replacementPortsMap[lp] = proxier.portsMap[lp]

} else {

socket, err := proxier.portMapper.OpenLocalPort(&lp, isIPv6)

if err != nil {

msg := fmt.Sprintf("can't open %s, skipping this externalIP: %v", lp.String(), err)

proxier.recorder.Eventf(

&v1.ObjectReference{

Kind: "Node",

Name: proxier.hostname,

UID: types.UID(proxier.hostname),

Namespace: "",

}, v1.EventTypeWarning, err.Error(), msg)

klog.Error(msg)

continue

}

replacementPortsMap[lp] = socket

}

} // We're holding the port, so it's OK to install IPVS rules.

// ipset call

entry := &utilipset.Entry{

IP: externalIP,

Port: svcInfo.Port(),

Protocol: protocol,

SetType: utilipset.HashIPPort,

}

if utilfeature.DefaultFeatureGate.Enabled(features.ExternalPolicyForExternalIP) && svcInfo.OnlyNodeLocalEndpoints() {

if valid := proxier.ipsetList[kubeExternalIPLocalSet].validateEntry(entry); !valid {

klog.Errorf("%s", fmt.Sprintf(EntryInvalidErr, entry, proxier.ipsetList[kubeExternalIPLocalSet].Name))

continue

}

proxier.ipsetList[kubeExternalIPLocalSet].activeEntries.Insert(entry.String())

} else {

// We have to SNAT packets to external IPs.

if valid := proxier.ipsetList[kubeExternalIPSet].validateEntry(entry); !valid {

klog.Errorf("%s", fmt.Sprintf(EntryInvalidErr, entry, proxier.ipsetList[kubeExternalIPSet].Name))

continue

}

proxier.ipsetList[kubeExternalIPSet].activeEntries.Insert(entry.String())

}

// ipvs call

serv := &utilipvs.VirtualServer{

Address: net.ParseIP(externalIP),

Port: uint16(svcInfo.Port()),

Protocol: string(svcInfo.Protocol()),

Scheduler: proxier.ipvsScheduler,

}

if svcInfo.SessionAffinityType() == v1.ServiceAffinityClientIP {

serv.Flags |= utilipvs.FlagPersistent

serv.Timeout = uint32(svcInfo.StickyMaxAgeSeconds())

}

if err := proxier.syncService(svcNameString, serv, true, bindedAddresses); err == nil {

activeIPVSServices[serv.String()] = true

activeBindAddrs[serv.Address.String()] = true

onlyNodeLocalEndpoints := false

if utilfeature.DefaultFeatureGate.Enabled(features.ExternalPolicyForExternalIP) {

onlyNodeLocalEndpoints = svcInfo.OnlyNodeLocalEndpoints()

}

if err := proxier.syncEndpoint(svcName, onlyNodeLocalEndpoints, serv); err != nil {

klog.Errorf("Failed to sync endpoint for service: %v, err: %v", serv, err)

}

} else {

klog.Errorf("Failed to sync service: %v, err: %v", serv, err)

}

}

// Capture load-balancer ingress.

for _, ingress := range svcInfo.LoadBalancerIPStrings() {

if ingress != "" {

// ipset call

entry = &utilipset.Entry{

IP: ingress,

Port: svcInfo.Port(),

Protocol: protocol,

SetType: utilipset.HashIPPort,

}

// add service load balancer ingressIP:Port to kubeServiceAccess ip set for the purpose of solving hairpin.

// proxier.kubeServiceAccessSet.activeEntries.Insert(entry.String())

// If we are proxying globally, we need to masquerade in case we cross nodes.

// If we are proxying only locally, we can retain the source IP.

if valid := proxier.ipsetList[kubeLoadBalancerSet].validateEntry(entry); !valid {

klog.Errorf("%s", fmt.Sprintf(EntryInvalidErr, entry, proxier.ipsetList[kubeLoadBalancerSet].Name))

continue

}

proxier.ipsetList[kubeLoadBalancerSet].activeEntries.Insert(entry.String())

// insert loadbalancer entry to lbIngressLocalSet if service externaltrafficpolicy=local

if svcInfo.OnlyNodeLocalEndpoints() {

if valid := proxier.ipsetList[kubeLoadBalancerLocalSet].validateEntry(entry); !valid {

klog.Errorf("%s", fmt.Sprintf(EntryInvalidErr, entry, proxier.ipsetList[kubeLoadBalancerLocalSet].Name))

continue

}

proxier.ipsetList[kubeLoadBalancerLocalSet].activeEntries.Insert(entry.String())

}

if len(svcInfo.LoadBalancerSourceRanges()) != 0 {

// The service firewall rules are created based on ServiceSpec.loadBalancerSourceRanges field.

// This currently works for loadbalancers that preserves source ips.

// For loadbalancers which direct traffic to service NodePort, the firewall rules will not apply.

if valid := proxier.ipsetList[kubeLoadbalancerFWSet].validateEntry(entry); !valid {

klog.Errorf("%s", fmt.Sprintf(EntryInvalidErr, entry, proxier.ipsetList[kubeLoadbalancerFWSet].Name))

continue

}

proxier.ipsetList[kubeLoadbalancerFWSet].activeEntries.Insert(entry.String())

allowFromNode := false

for _, src := range svcInfo.LoadBalancerSourceRanges() {

// ipset call

entry = &utilipset.Entry{

IP: ingress,

Port: svcInfo.Port(),

Protocol: protocol,

Net: src,

SetType: utilipset.HashIPPortNet,

}

// enumerate all white list source cidr

if valid := proxier.ipsetList[kubeLoadBalancerSourceCIDRSet].validateEntry(entry); !valid {

klog.Errorf("%s", fmt.Sprintf(EntryInvalidErr, entry, proxier.ipsetList[kubeLoadBalancerSourceCIDRSet].Name))

continue

}

proxier.ipsetList[kubeLoadBalancerSourceCIDRSet].activeEntries.Insert(entry.String())

// ignore error because it has been validated

_, cidr, _ := net.ParseCIDR(src)

if cidr.Contains(proxier.nodeIP) {

allowFromNode = true

}

}

// generally, ip route rule was added to intercept request to loadbalancer vip from the

// loadbalancer's backend hosts. In this case, request will not hit the loadbalancer but loop back directly.

// Need to add the following rule to allow request on host.

if allowFromNode {

entry = &utilipset.Entry{

IP: ingress,

Port: svcInfo.Port(),

Protocol: protocol,

IP2: ingress,

SetType: utilipset.HashIPPortIP,

}

// enumerate all white list source ip

if valid := proxier.ipsetList[kubeLoadBalancerSourceIPSet].validateEntry(entry); !valid {

klog.Errorf("%s", fmt.Sprintf(EntryInvalidErr, entry, proxier.ipsetList[kubeLoadBalancerSourceIPSet].Name))

continue

}

proxier.ipsetList[kubeLoadBalancerSourceIPSet].activeEntries.Insert(entry.String())

}

}

// ipvs call

serv := &utilipvs.VirtualServer{

Address: net.ParseIP(ingress),

Port: uint16(svcInfo.Port()),

Protocol: string(svcInfo.Protocol()),

Scheduler: proxier.ipvsScheduler,

}

if svcInfo.SessionAffinityType() == v1.ServiceAffinityClientIP {

serv.Flags |= utilipvs.FlagPersistent

serv.Timeout = uint32(svcInfo.StickyMaxAgeSeconds())

}

if err := proxier.syncService(svcNameString, serv, true, bindedAddresses); err == nil {

activeIPVSServices[serv.String()] = true

activeBindAddrs[serv.Address.String()] = true

if err := proxier.syncEndpoint(svcName, svcInfo.OnlyNodeLocalEndpoints(), serv); err != nil {

klog.Errorf("Failed to sync endpoint for service: %v, err: %v", serv, err)

}

} else {

klog.Errorf("Failed to sync service: %v, err: %v", serv, err)

}

}

}

if svcInfo.NodePort() != 0 {

if len(nodeAddresses) == 0 || len(nodeIPs) == 0 {

// Skip nodePort configuration since an error occurred when

// computing nodeAddresses or nodeIPs.

continue

}

var lps []utilproxy.LocalPort

for _, address := range nodeAddresses {

lp := utilproxy.LocalPort{

Description: "nodePort for " + svcNameString,

IP: address,

Port: svcInfo.NodePort(),

Protocol: protocol,

}

if utilproxy.IsZeroCIDR(address) {

// Empty IP address means all

lp.IP = ""

lps = append(lps, lp)

// If we encounter a zero CIDR, then there is no point in processing the rest of the addresses.

break

}

lps = append(lps, lp)

}

// For ports on node IPs, open the actual port and hold it.

for _, lp := range lps {

if proxier.portsMap[lp] != nil {

klog.V(4).Infof("Port %s was open before and is still needed", lp.String())

replacementPortsMap[lp] = proxier.portsMap[lp]

// We do not start listening on SCTP ports, according to our agreement in the

// SCTP support KEP

} else if svcInfo.Protocol() != v1.ProtocolSCTP {

socket, err := proxier.portMapper.OpenLocalPort(&lp, isIPv6)

if err != nil {

klog.Errorf("can't open %s, skipping this nodePort: %v", lp.String(), err)

continue

}

if lp.Protocol == "udp" {

conntrack.ClearEntriesForPort(proxier.exec, lp.Port, isIPv6, v1.ProtocolUDP)

}

replacementPortsMap[lp] = socket

} // We're holding the port, so it's OK to install ipvs rules.

}

// Nodeports need SNAT, unless they're local.

// ipset call

var (

nodePortSet *IPSet

entries []*utilipset.Entry

)

switch protocol {

case "tcp":

nodePortSet = proxier.ipsetList[kubeNodePortSetTCP]

entries = []*utilipset.Entry{{

// No need to provide ip info

Port: svcInfo.NodePort(),

Protocol: protocol,

SetType: utilipset.BitmapPort,

}}

case "udp":

nodePortSet = proxier.ipsetList[kubeNodePortSetUDP]

entries = []*utilipset.Entry{{

// No need to provide ip info

Port: svcInfo.NodePort(),

Protocol: protocol,

SetType: utilipset.BitmapPort,

}}

case "sctp":

nodePortSet = proxier.ipsetList[kubeNodePortSetSCTP]

// Since hash ip:port is used for SCTP, all the nodeIPs to be used in the SCTP ipset entries.

entries = []*utilipset.Entry{}

for _, nodeIP := range nodeIPs {

entries = append(entries, &utilipset.Entry{

IP: nodeIP.String(),

Port: svcInfo.NodePort(),

Protocol: protocol,

SetType: utilipset.HashIPPort,

})

}

default:

// It should never hit

klog.Errorf("Unsupported protocol type: %s", protocol)

}

if nodePortSet != nil {

entryInvalidErr := false

for _, entry := range entries {

if valid := nodePortSet.validateEntry(entry); !valid {

klog.Errorf("%s", fmt.Sprintf(EntryInvalidErr, entry, nodePortSet.Name))

entryInvalidErr = true

break

}

nodePortSet.activeEntries.Insert(entry.String())

}

if entryInvalidErr {

continue

}

}

// Add externaltrafficpolicy=local type nodeport entry

if svcInfo.OnlyNodeLocalEndpoints() {

var nodePortLocalSet *IPSet

switch protocol {

case "tcp":

nodePortLocalSet = proxier.ipsetList[kubeNodePortLocalSetTCP]

case "udp":

nodePortLocalSet = proxier.ipsetList[kubeNodePortLocalSetUDP]

case "sctp":

nodePortLocalSet = proxier.ipsetList[kubeNodePortLocalSetSCTP]

default:

// It should never hit

klog.Errorf("Unsupported protocol type: %s", protocol)

}

if nodePortLocalSet != nil {

entryInvalidErr := false

for _, entry := range entries {

if valid := nodePortLocalSet.validateEntry(entry); !valid {

klog.Errorf("%s", fmt.Sprintf(EntryInvalidErr, entry, nodePortLocalSet.Name))

entryInvalidErr = true

break

}

nodePortLocalSet.activeEntries.Insert(entry.String())

}

if entryInvalidErr {

continue

}

}

}

// Build ipvs kernel routes for each node ip address

for _, nodeIP := range nodeIPs {

// ipvs call

serv := &utilipvs.VirtualServer{

Address: nodeIP,

Port: uint16(svcInfo.NodePort()),

Protocol: string(svcInfo.Protocol()),

Scheduler: proxier.ipvsScheduler,

}

if svcInfo.SessionAffinityType() == v1.ServiceAffinityClientIP {

serv.Flags |= utilipvs.FlagPersistent

serv.Timeout = uint32(svcInfo.StickyMaxAgeSeconds())

}

// There is no need to bind Node IP to dummy interface, so set parameter `bindAddr` to `false`.

if err := proxier.syncService(svcNameString, serv, false, bindedAddresses); err == nil {

activeIPVSServices[serv.String()] = true

if err := proxier.syncEndpoint(svcName, svcInfo.OnlyNodeLocalEndpoints(), serv); err != nil {

klog.Errorf("Failed to sync endpoint for service: %v, err: %v", serv, err)

}

} else {

klog.Errorf("Failed to sync service: %v, err: %v", serv, err)

}

}

}

}

其中有两个非常重要的函数 syncService() 于 syncEndpoint() 这两个定义了同步的过程

syncService() 函数表示了增加或删除一个service,如果存在则修改,如果存在则添加

func (proxier *Proxier) syncService(svcName string, vs *utilipvs.VirtualServer, bindAddr bool, bindedAddresses sets.String) error {

appliedVirtualServer, _ := proxier.ipvs.GetVirtualServer(vs)

if appliedVirtualServer == nil || !appliedVirtualServer.Equal(vs) {

if appliedVirtualServer == nil {

// IPVS service is not found, create a new service

klog.V(3).Infof("Adding new service %q %s:%d/%s", svcName, vs.Address, vs.Port, vs.Protocol)

if err := proxier.ipvs.AddVirtualServer(vs); err != nil {

klog.Errorf("Failed to add IPVS service %q: %v", svcName, err)

return err

}

} else {

// IPVS service was changed, update the existing one

// During updates, service VIP will not go down

klog.V(3).Infof("IPVS service %s was changed", svcName)

if err := proxier.ipvs.UpdateVirtualServer(vs); err != nil {

klog.Errorf("Failed to update IPVS service, err:%v", err)

return err

}

}

}

// bind service address to dummy interface

if bindAddr {

// always attempt to bind if bindedAddresses is nil,

// otherwise check if it's already binded and return early

if bindedAddresses != nil && bindedAddresses.Has(vs.Address.String()) {

return nil

}

klog.V(4).Infof("Bind addr %s", vs.Address.String())

_, err := proxier.netlinkHandle.EnsureAddressBind(vs.Address.String(), DefaultDummyDevice)

if err != nil {

klog.Errorf("Failed to bind service address to dummy device %q: %v", svcName, err)

return err

}

}

return nil

}

同理 syncEndpoint() 也是相同的操作

func (proxier *Proxier) syncEndpoint(svcPortName proxy.ServicePortName, onlyNodeLocalEndpoints bool, vs *utilipvs.VirtualServer) error {

appliedVirtualServer, err := proxier.ipvs.GetVirtualServer(vs)

if err != nil || appliedVirtualServer == nil {

klog.Errorf("Failed to get IPVS service, error: %v", err)

return err

}

// curEndpoints represents IPVS destinations listed from current system.

curEndpoints := sets.NewString()

// newEndpoints represents Endpoints watched from API Server.

newEndpoints := sets.NewString()

curDests, err := proxier.ipvs.GetRealServers(appliedVirtualServer)

if err != nil {

klog.Errorf("Failed to list IPVS destinations, error: %v", err)

return err

}

for _, des := range curDests {

curEndpoints.Insert(des.String())

}

endpoints := proxier.endpointsMap[svcPortName]

// Service Topology will not be enabled in the following cases:

// 1. externalTrafficPolicy=Local (mutually exclusive with service topology).

// 2. ServiceTopology is not enabled.

// 3. EndpointSlice is not enabled (service topology depends on endpoint slice

// to get topology information).

if !onlyNodeLocalEndpoints && utilfeature.DefaultFeatureGate.Enabled(features.ServiceTopology) && utilfeature.DefaultFeatureGate.Enabled(features.EndpointSliceProxying) {

endpoints = proxy.FilterTopologyEndpoint(proxier.nodeLabels, proxier.serviceMap[svcPortName].TopologyKeys(), endpoints)

}

for _, epInfo := range endpoints {

if onlyNodeLocalEndpoints && !epInfo.GetIsLocal() {

continue

}

newEndpoints.Insert(epInfo.String())

}

// Create new endpoints

for _, ep := range newEndpoints.List() {

ip, port, err := net.SplitHostPort(ep)

if err != nil {

klog.Errorf("Failed to parse endpoint: %v, error: %v", ep, err)

continue

}

portNum, err := strconv.Atoi(port)

if err != nil {

klog.Errorf("Failed to parse endpoint port %s, error: %v", port, err)

continue

}

newDest := &utilipvs.RealServer{

Address: net.ParseIP(ip),

Port: uint16(portNum),

Weight: 1,

}

if curEndpoints.Has(ep) {

// check if newEndpoint is in gracefulDelete list, if true, delete this ep immediately

uniqueRS := GetUniqueRSName(vs, newDest)

if !proxier.gracefuldeleteManager.InTerminationList(uniqueRS) {

continue

}

klog.V(5).Infof("new ep %q is in graceful delete list", uniqueRS)

err := proxier.gracefuldeleteManager.MoveRSOutofGracefulDeleteList(uniqueRS)

if err != nil {

klog.Errorf("Failed to delete endpoint: %v in gracefulDeleteQueue, error: %v", ep, err)

continue

}

}

err = proxier.ipvs.AddRealServer(appliedVirtualServer, newDest)

if err != nil {

klog.Errorf("Failed to add destination: %v, error: %v", newDest, err)

continue

}

}

// Delete old endpoints

for _, ep := range curEndpoints.Difference(newEndpoints).UnsortedList() {

// if curEndpoint is in gracefulDelete, skip

uniqueRS := vs.String() + "/" + ep

if proxier.gracefuldeleteManager.InTerminationList(uniqueRS) {

continue

}

ip, port, err := net.SplitHostPort(ep)

if err != nil {

klog.Errorf("Failed to parse endpoint: %v, error: %v", ep, err)

continue

}

portNum, err := strconv.Atoi(port)

if err != nil {

klog.Errorf("Failed to parse endpoint port %s, error: %v", port, err)

continue

}

delDest := &utilipvs.RealServer{

Address: net.ParseIP(ip),

Port: uint16(portNum),

}

klog.V(5).Infof("Using graceful delete to delete: %v", uniqueRS)

err = proxier.gracefuldeleteManager.GracefulDeleteRS(appliedVirtualServer, delDest)

if err != nil {

klog.Errorf("Failed to delete destination: %v, error: %v", uniqueRS, err)

continue

}

}

return nil

}

接下来就是同步规则的步骤了,L1544-L1621

step 3:规则的删除

粗略翻到这里可能有一个疑问?没有提到删除,删除时包含在 syncXX() 函数中的

例如在 syncEndpoint() 中会看是否在 终止列表中,如果在跳过,如果不在加入

if curEndpoints.Has(ep) {

// check if newEndpoint is in gracefulDelete list, if true, delete this ep immediately

uniqueRS := GetUniqueRSName(vs, newDest)

if !proxier.gracefuldeleteManager.InTerminationList(uniqueRS) {

continue

}

klog.V(5).Infof("new ep %q is in graceful delete list", uniqueRS)

err := proxier.gracefuldeleteManager.MoveRSOutofGracefulDeleteList(uniqueRS)

if err != nil {

klog.Errorf("Failed to delete endpoint: %v in gracefulDeleteQueue, error: %v", ep, err)

continue

}

}

而 gracefuldeleteManager 是一个 一直运行的协程,在初始化 proxier 时被 Run()

// Run start a goroutine to try to delete rs in the graceful delete rsList with an interval 1 minute

func (m *GracefulTerminationManager) Run() {

go wait.Until(m.tryDeleteRs, rsCheckDeleteInterval, wait.NeverStop)

}

proxier.syncRunner = async.NewBoundedFrequencyRunner("sync-runner", proxier.syncProxyRules, minSyncPeriod, syncPeriod, burstSyncs)

proxier.gracefuldeleteManager.Run()

return proxier, nil

总结

到这里已经清楚的掌握了 kube-proxy 的架构,接下来的会为扩展kubernetes中service架构,以及手撸一个 kube-proxy做准备;本系列第三部分:如何扩展现有的kube-proxy架构

文中的知识都是个人根据理解整理的,如有不对的地方欢迎指出,感谢各位大佬

Reference

本文发布于Cylon的收藏册,转载请著名原文链接~

链接:https://www.oomkill.com/2023/02/ch19-kube-proxy-code/

版权:本作品采用「署名-非商业性使用-相同方式共享 4.0 国际」 许可协议进行许可。